Key Takeaways

- 95% of enterprise AI pilots deliver no measurable P&L impact, and 42% of companies scrapped most of their AI initiatives in 2025.

- The #1 root cause of AI failure isn't bad technology. It's that organizations don't understand the work before they try to automate it.

- Automating a broken process doesn't fix it. It industrializes the dysfunction at machine speed.

- The right approach: understand reality, design intent, then automate. Never the reverse.

- The 5% that succeed embed AI into specific, well-understood processes rather than deploying it broadly.

The Mass Delusion

Let's skip the pleasantries.

There's a mass delusion happening in boardrooms worldwide right now. It goes something like this: "We have a process problem. Let's throw AI at it."

That's not a strategy. That's a prayer.

And the data is no longer anecdotal. It's damning.

A July 2025 study from MIT's Project NANDA—based on 300+ initiative reviews, 52 organizational interviews, and 153 senior leader surveys—found that 95% of enterprise AI pilots delivered no measurable P&L impact. Despite $30–40 billion in investment. Five percent are seeing returns. The rest are burning cash.

RAND Corporation's research tells the same story from a different angle: over 80% of AI projects fail—twice the failure rate of non-AI IT projects. And the number one root cause, based on interviews with 65 data scientists and engineers? Organizations misunderstand or miscommunicate what problem actually needs to be solved.

Meanwhile, S&P Global's 2025 survey of over 1,000 enterprises found that 42% of companies scrapped most of their AI initiatives this year, up from 17% just one year prior. The average organization abandoned 46% of its proofs of concept before they ever reached production.

Read those numbers again. This isn't a technology problem. This is a leadership problem. And it's one that existed long before anyone said the words "large language model" in a strategy meeting.

Think you can describe a simple process clearly enough for a machine to execute? The PBJ Challenge lets you try—instruct a relentlessly literal robot to make a sandwich and see what happens when your assumptions meet zero interpretation. Most people fail. Takes 3 minutes.

AI gave leaders permission to skip the hard work of understanding their own operations. And they took it.

The Thing Nobody Can Describe

Here's what's actually happening inside most organizations attempting AI implementation:

Nobody can describe how work gets done today. Not with any precision. Not in a way that could survive being handed to a machine.

The work lives in people's heads. It survives on tribal knowledge, heroic effort, and a handful of people who compensate for every gap in the system. Nobody designed those gaps; they just happened. Years of quick fixes, workarounds, and "that's just how we do it" stacked on top of each other until the whole operation runs on institutional muscle memory that no one can articulate.

And now someone in leadership has decided that AI is going to replace all of that.

Think about the arrogance of that for a moment. You can't document what your people do. You can't explain why your process works when it works, or why it breaks when it breaks. But you're confident a machine can figure it out.

That's not innovation. That's negligence.

Industrialized Chaos

This is the part that should terrify every executive with an active AI initiative.

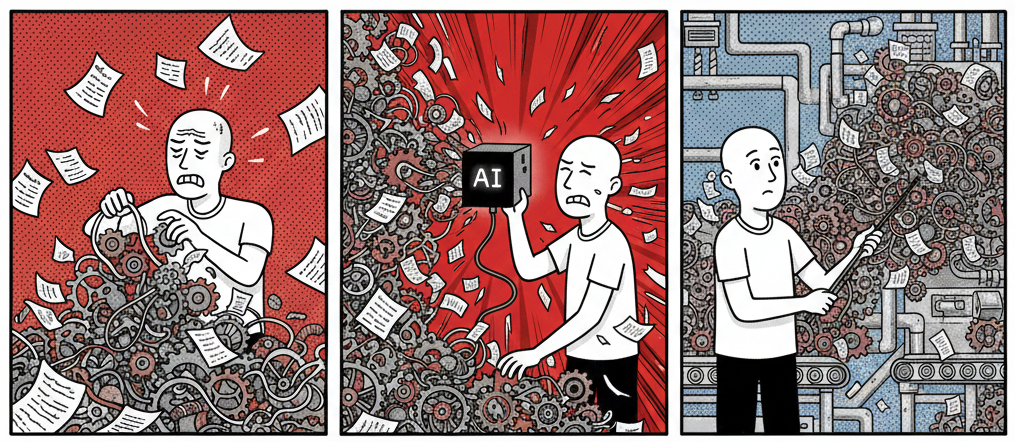

When you point AI at a broken process, you don't get a fixed process. You get a broken process running at machine speed.

AI doesn't replace broken processes. It automates them. Faster. At scale. With nobody who understands why things are breaking.

Every workaround gets encoded. Every inefficiency gets amplified. Every compensating behavior that a human used to catch and correct now flies through the system unchecked, faster than anyone can intervene.

You haven't solved the problem. You've industrialized it.

And the worst part? You've now made it harder to fix. Because the mess is no longer contained within human judgment and adaptability—it's baked into an automated system that nobody fully understands, running on logic derived from a process nobody could describe in the first place.

That's not a technology failure. That's an operational identity crisis. And no amount of prompt engineering is going to fix it. RAND's researchers put it plainly: "Misunderstandings and miscommunications about the intent and purpose of the project are the most common reasons for AI project failure." The leading cause isn't bad models or bad data. It's a bad understanding of the work itself.

The Numbers Don't Lie

If you think "our AI initiative is different," consider what the research says about everybody else's AI initiative:

95% of enterprise AI pilots deliver zero measurable P&L impact, despite $30–40 billion in investment. Only 5% of integrated pilots create significant value.

Source: MIT Project NANDA, The GenAI Divide: State of AI in Business 2025

80%+ of AI projects fail, twice the failure rate of non-AI IT projects. The #1 root cause: organizations misunderstand or miscommunicate the problem they're trying to solve.

Source: RAND Corporation, The Root Causes of Failure for Artificial Intelligence Projects, 2024

42% of companies scrapped most of their AI initiatives in 2025, up from 17% just one year prior. The average organization abandoned 46% of its proofs of concept before production.

Source: S&P Global Market Intelligence, Voice of the Enterprise: AI & Machine Learning, Use Cases 2025

Three independent research bodies. Three different methodologies. The same conclusion: the problem isn't that AI doesn't work. The problem is that organizations are deploying it without understanding the work it's supposed to do.

And the trend is accelerating. As Fortune reported, "AI fatigue" is settling in as companies watch their proofs of concept fail at increasing rates. The hype cycle promised transformation. The data shows expensive experimentation going nowhere.

The Playbook Is Backwards

The prevailing execution path for AI implementation is fundamentally inverted. It starts with the technology and works backward toward the problem.

Pick a tool. Find a use case. Deploy. Measure. Wonder why it didn't work.

This is a solution looking for a problem, and it's been a failing strategy since long before AI entered the conversation.

Here's what it should look like:

First, understand the reality.

Map how work actually gets done—not the idealized process on the wall, not the flowchart from 2019, but the real, messy, human-driven reality of how value moves through your organization. Who does what. Why. Where things break. Where heroes are compensating. Where tribal knowledge is the only thing holding it together.

Then, design the intent.

What should this process look like when it's working deliberately? What are the decision points? Where does human judgment create irreplaceable value? Where is effort being wasted on tasks that don't require a human brain?

Only then do you decide what to automate.

And the answer is almost never "everything." The answer is the specific, well-understood steps where a machine can execute with precision—because you've defined exactly what precision means.

This isn't slower. It's the only approach that actually works.

The Binary Trap

There's a dangerous false choice driving most AI conversations right now: humans or machines. Replace or don't.

This binary framing is lazy. And it's producing terrible outcomes.

The real question isn't whether to automate. It's what to automate, why, and how it integrates with the humans who remain in the loop.

Because humans are going to remain in the loop. The organizations that figure out the right combination—where machines handle the repeatable and humans handle the judgment—are the ones that will actually see returns on their AI investment.

The ones chasing full automation of processes they don't understand are going to spend a fortune learning what the rest of us already know. (If you want a clearer framework for where AI actually fits, I wrote about the AI opportunity most business owners are missing—it's the companion to this piece. And if you're ready for the one question you must answer before deploying AI, that's the next step.)

You can't outsource understanding.

Not to consultants. Not to vendors. And certainly not to a machine that's only as good as the process description you fed it—which, in most cases, doesn't exist. MIT's research found that more than half of enterprise AI budgets flow to sales and marketing pilots—yet the biggest ROI shows up in back-office automation, where the actual operational work lives. Organizations aren't just failing to understand their work. They're investing in the wrong places because they don't understand their work.

What the Winners Will Do Differently

The organizations that will win the AI era aren't the ones moving fastest. They're the ones moving most deliberately.

They're doing the hard, unglamorous work of understanding their own operations with enough depth and precision that automation becomes an obvious, targeted decision—not a Hail Mary.

They're building their operation maps. Designing their workflows. Defining their steps with the kind of rigor that makes a machine's job easy—because a human already figured out what "good" looks like.

They're not asking "where can we use AI?" They're asking "where does our work actually create value, and what's getting in the way?" The research backs this up—MIT found that the 5% who succeed share a common trait: they embed AI into specific, well-understood processes rather than spraying it across the organization. And RAND's interviews confirmed that successful teams are laser-focused on the problem to be solved, not the technology used to solve it.

The AI question answers itself once you understand the work. It becomes obvious. That step is repeatable and well-defined—automate it. This step requires human judgment and context—don't.

No hype required. No guessing. Just operational clarity applied to operational decisions.

This Is Deliberate Work

Everything I've just described (mapping reality, designing intent, making targeted automation decisions) is the Deliberate Work methodology.

It's not anti-AI. It's anti-stupid.

It's the recognition that every failed AI implementation shares the same root cause: nobody understood the work before they tried to hand it to a machine. The technology was fine. The foundation was missing.

I've spent 25 years in industrial automation, business operations, and consulting. I've programmed robots on factory floors. I've built and run businesses that scaled. I've watched organizations make this exact mistake—skipping understanding, jumping to solutions—long before AI made it fashionable. The transition from accidental to deliberate is the same regardless of the technology involved.

The pattern is always the same. The technology changes. The failure mode doesn't.

You can't automate what you can't describe. You can't optimize what you don't understand. And you can't scale what's already broken without scaling the brokenness with it.

Understanding comes first. Everything else—including AI—comes after.

Sources & Further Reading

- On enterprise AI failure rates: Challapally, A., Pease, C., Raskar, R., & Chari, P. (2025). The GenAI Divide: State of AI in Business 2025. MIT Project NANDA. Based on 300+ initiative reviews, 52 organizational interviews, and 153 senior leader surveys. Finds 95% of enterprise AI pilots deliver no measurable P&L impact despite $30–40B invested.

- On AI project root causes of failure: Ryseff, J., De Bruhl, B., & Newberry, S. J. (2024). The Root Causes of Failure for Artificial Intelligence Projects and How They Can Succeed. RAND Corporation. Based on interviews with 65 data scientists and engineers. Finds 80%+ of AI projects fail—twice the rate of non-AI IT projects—with misunderstanding the problem as the #1 cause.

- On accelerating AI abandonment: S&P Global Market Intelligence. (2025). Voice of the Enterprise: AI & Machine Learning, Use Cases 2025. Survey of 1,006 enterprises across North America and Europe. Finds 42% of companies scrapped most AI initiatives in 2025, up from 17% in 2024.

- On AI fatigue in the enterprise: Fortune. (2025). 'AI fatigue' is settling in as companies' proofs of concept keep failing. Reporting on rising abandonment rates and the growing disconnect between AI investment and AI outcomes.

- On building deliberate systems: For the complete framework on understanding work before automating it, Deliberate Work covers the methodology in depth. Get on the early access list.

Henry applies this framework in practice. Try it at okhenry.ai.