Most companies don't have an AI problem. They have a systems visibility problem. And AI won't fix it—it'll just make the mess move faster.

The Question Nobody Asks

Here's the question: do you understand your own work well enough to know where AI actually helps?

Most companies never ask it. They move straight to the tool—the platform, the agent, the automation layer—without first looking at the work itself. And that's the trap.

They can't see where decisions are made, who's making them, or why. So when AI arrives with promises of magic automation, the thinking becomes: finally, something that can figure out the mess we've built.

But AI isn't magic. It can't save you from not understanding your own work. And the data backs this up—only 21% of organizations have fundamentally redesigned their workflows before deploying AI. The other 79% are bolting a powerful tool onto a system they don't fully understand.

I've written about this before: AI doesn't fix broken processes—it scales them. This article is the other side of that argument. Not just what goes wrong when you skip the foundation, but what the right question actually is—and what you need to answer it.

The real conversation isn't binary. It's not "do we automate this or not?" It's "at what level of human involvement does this particular step actually work best?"

That's the analog question. And it requires you to know your own system first.

Why Companies Default to All-or-Nothing

Why do companies reach for binary thinking about AI? Three reasons.

First: fear. Everyone's talking about AI replacing jobs, disrupting industries, moving faster than you can keep up. So the thinking becomes: we either get ahead of this wave or we drown in it. Binary fear drives binary decisions.

Second: vendor narrative. The entire AI industry is built on the panacea story. "Our tool will automate your customer service. Our agent will handle your workflows." Nobody is selling you a framework for thoughtful integration because that doesn't fit a two-minute demo. The product they're selling is certainty, not nuance.

Third: it's easier. Understanding your actual work—mapping the steps, seeing where decisions happen, decomposing what's tribal knowledge versus what's repeatable—that's hard. It takes time. It requires honesty about what you don't know. Reaching for AI feels like progress without that work.

But here's what actually happens:

You bolt AI onto a broken system and wonder why it breaks faster. The process you didn't understand is now running at machine speed, and the gaps that were invisible are now impossible to ignore. Except now they're harder to fix because you've added an abstraction layer between your people and the work.

This is the pattern behind every broken operation (tribal knowledge, invisible handoffs, heroic effort), now turbocharged by AI.

AI gave leaders permission to skip the hard work of understanding their own operations. And the results are exactly what you'd expect.

The Answer to Binary Thinking

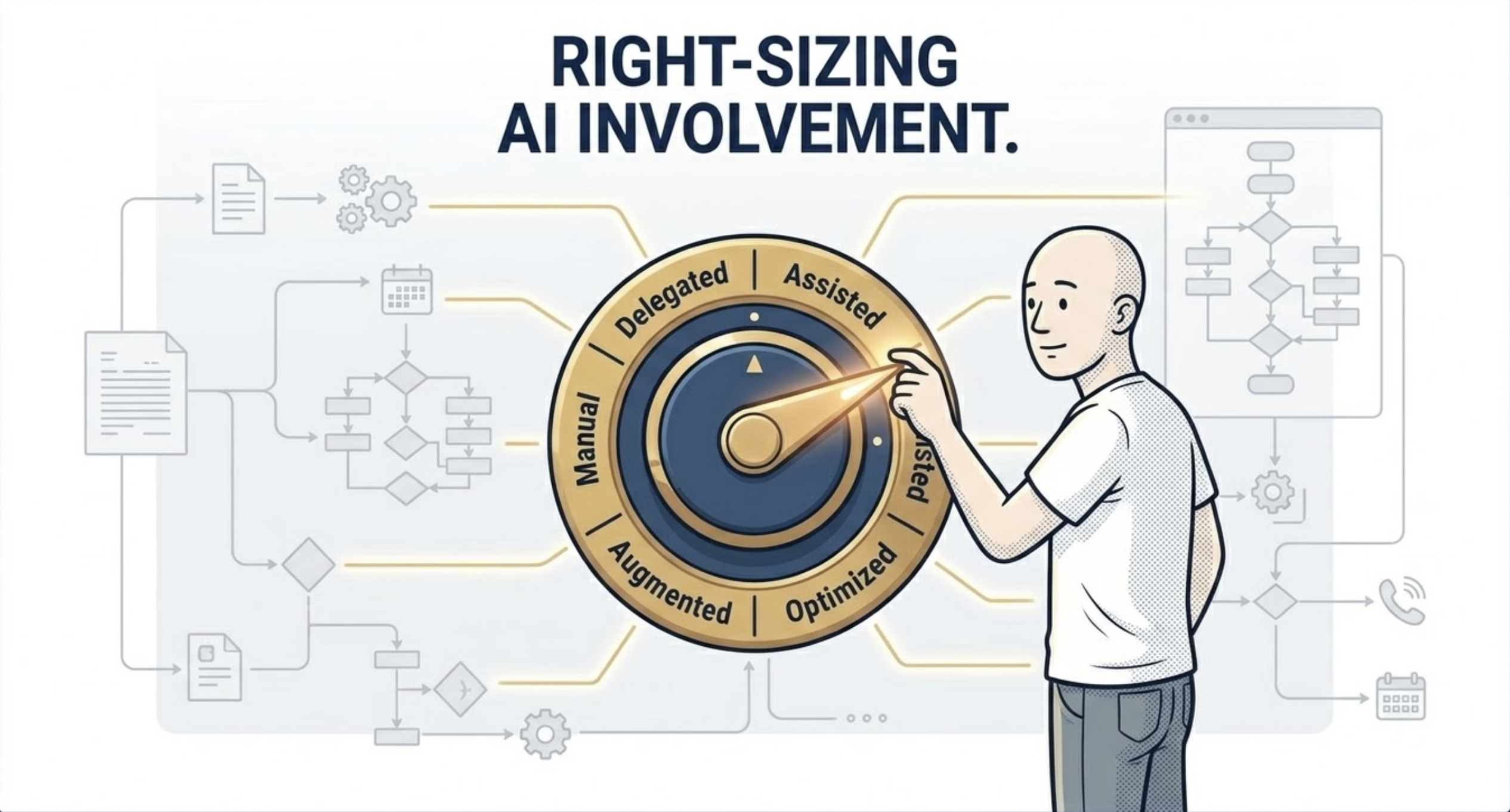

The answer to the binary trap is the Human / AI Handshake. It's not a question of whether to automate. It's a question of how much human judgment stays in the loop at each step.

There are six modes, and each one is valid depending on the work:

Manual

Humans do the work. AI watches, logs, and learns. Full human ownership, no delegation.

Guided

Humans lead the decision. AI surfaces suggestions, flags patterns, and assists—but never drives.

AI-Assisted

AI leads the execution. Humans validate, review, and correct before output moves forward.

Gated Autonomous

AI executes—but only if its confidence passes a defined threshold. Below that, it routes back to a human. No confidence, no action.

Autonomous

AI executes without human involvement. The step is clear, low-risk, and repeatable enough to run without oversight.

External

The work leaves your organization entirely—to a partner, vendor, or platform. Human and AI involvement is someone else's concern.
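As a rough illustration, the six modes can be written down as an enumeration, with the Gated Autonomous rule expressed as a simple confidence check. This is a minimal sketch, not part of the framework itself: the `Mode` names mirror the list above, while the `route_gated` function, the 0-to-1 `confidence` score, and the 0.9 default threshold are hypothetical choices made for the example.

```python
from enum import Enum


class Mode(Enum):
    """The six Handshake modes, named as in the list above."""
    MANUAL = "Manual"
    GUIDED = "Guided"
    AI_ASSISTED = "AI-Assisted"
    GATED_AUTONOMOUS = "Gated Autonomous"
    AUTONOMOUS = "Autonomous"
    EXTERNAL = "External"


def route_gated(confidence: float, threshold: float = 0.9) -> str:
    """Decide who handles one item in a Gated Autonomous step.

    Assumption: `confidence` is a score between 0 and 1 reported by the AI.
    The 0.9 default is illustrative only, not a recommended value.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # Meeting the threshold counts as passing; anything below goes to a human.
    return "ai" if confidence >= threshold else "human"
```

In practice the threshold would be set per step, based on the cost of an error at that step, rather than shared across the whole workflow.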

The key insight is this: these aren't tiers of maturity where you're trying to climb from Manual to Autonomous. They're dials. You set each one based on the actual risk, the cost of error, the nature of the decision, and your capacity to handle judgment calls.

A repetitive, low-stakes task might go Autonomous. A high-stakes customer decision might stay Manual or Guided forever. That's not a failure of AI adoption. That's intelligence. (For a practical walkthrough of how these modes apply to real businesses—plumbers, accountants, consultants—see the AI opportunity most business owners are missing.)

Think you can describe a process clearly enough for a machine to execute? The PBJ Challenge lets you try—instruct a relentlessly literal robot to make a sandwich and see what happens when your assumptions meet zero interpretation. Most people fail. Takes 3 minutes.

The Missing Prerequisite

Here's where most companies fail: they try to place AI on the Handshake dial without actually knowing what the work is. And that's impossible.

You can't intelligently decide whether a step should be Manual, Guided, or Autonomous if you don't first understand what the step actually is.

That's where Deliberate Work enters. Not as an afterthought. Not as something you do after you've already bought the AI tool. As the foundation—a methodology born from two traditions that independently figured out how to transform ordinary performance into extraordinary results.

Step-level decomposition is the prerequisite. It means breaking down your work into atomic units—across every stage of the customer journey—capturing what's true about every step before you touch it:

Intent: What is this step trying to accomplish? What changes because it exists?

Inputs: What does this step actually need in order to execute? What's required, and where does it come from?

Execution: What happens inside this step? Who or what does the work, and how?

Output: What does this step produce? What is handed off, and to what or whom?

Impact: What does a good outcome actually look like? How will you know this step succeeded?

Once you can answer those questions for every step, you can ask the right question about AI: where in this chain does human judgment create value—and where does it create drag?

Where is the step clear enough that an AI can own it? Where is it ambiguous enough that you need human judgment in the loop? Where does a mistake cost you a customer versus a few dollars?

That clarity doesn't come from an AI vendor. It comes from knowing your own work.
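One way to make the five questions concrete is to record them per step and hold off on setting a dial until all five are answered. The sketch below assumes free-text answers and a made-up example step; the `Step` class and its method names are hypothetical, introduced only for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class Step:
    """One atomic unit of work, described by the five questions above."""
    name: str
    intent: str = ""
    inputs: str = ""
    execution: str = ""
    output: str = ""
    impact: str = ""

    def missing(self) -> list[str]:
        """Return the names of the fields that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def ready_for_dial(self) -> bool:
        """A step is ready for a mode decision only when nothing is blank."""
        return not self.missing()


# Hypothetical step with only the intent captured so far.
draft = Step(name="Send welcome email", intent="Confirm signup and set expectations")
print(draft.missing())         # ['inputs', 'execution', 'output', 'impact']
print(draft.ready_for_dial())  # False
```

The point of the check is ordering: the mode decision comes after the description is complete, not before.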

Two Examples, Same Mistake

Let's make this concrete.

The Marketing Team

A marketing team gets excited about AI. The plan: use an AI agent to write all their email copy, segment audiences, optimize send times. Full autonomous execution. Sounds like a capability leap.

Until you realize nobody on the team has actually documented what makes an email convert for their specific audience. There's no step-level clarity on why certain messages land. The customer voice, the pain hierarchy, the tone—it lives in one person's head and gets adjusted by instinct every send.

So the AI writes statistically plausible copy that hits nobody. The surface metrics look fine. The conversions don't come.

Wrong dial setting. They needed Guided or AI-Assisted first—humans defining the intent, the voice, the customer truth, then AI drafting, then humans reviewing and correcting. Over time, as patterns emerge and the system learns, you move the dial toward Autonomous on the low-stakes sends. But you earn that. You don't assume it.

The Customer Success Team

A customer success team wants to automate renewals. They plug in an AI that flags at-risk accounts and triggers outreach sequences automatically.

But the definition of "at-risk" lives entirely in the head of their two best CSMs. Nobody has decomposed what signals actually matter, what the appropriate intervention looks like, or what a good outcome requires at each level of urgency.

The AI flags the wrong accounts. The outreach lands tone-deaf. Renewals drop. The team blames the tool. But the tool never had a chance. The work wasn't visible enough to hand off.

What they needed first:

Map the renewal step. What signals trigger concern? What's the threshold between monitoring and acting?

Define the intervention ladder. What response is appropriate at each level of risk—and who decides?

Separate the repeatable from the relational. Account flagging might go Autonomous. First outreach might be AI-Assisted. The renewal conversation itself might stay Manual, because at that moment the relationship is irreplaceable.

That's not a limitation of AI. That's the right answer. And it only becomes visible once you've done the step-level work first.
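The renewal example can be written down as a per-step dial map. This is a small sketch under stated assumptions: the step names paraphrase the example above, and the `HUMAN_IN_LOOP` grouping and the `steps_needing_people` helper are hypothetical, derived from the mode descriptions, where Manual, Guided, and AI-Assisted all involve a person on every item.

```python
# Dial settings suggested in the renewal example; step names are paraphrased.
RENEWAL_DIALS = {
    "account flagging": "Autonomous",
    "first outreach": "AI-Assisted",
    "renewal conversation": "Manual",
}

# Modes in which a person does, leads, or reviews every item.
HUMAN_IN_LOOP = {"Manual", "Guided", "AI-Assisted"}


def steps_needing_people(dials: dict[str, str]) -> list[str]:
    """List the steps where a person is involved in every item."""
    return [step for step, mode in dials.items() if mode in HUMAN_IN_LOOP]


print(steps_needing_people(RENEWAL_DIALS))
# ['first outreach', 'renewal conversation']
```

Writing the map out makes the staffing consequence visible: two of the three steps still need a person each time, which is a design decision and not a shortfall.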

Back to the Question

Do you understand your work well enough to know where AI actually helps?

Can you see the steps? Can you name the decisions? Can you separate what requires judgment from what requires repetition?

If the answer is no, AI won't save you. It'll make the mess move faster—at machine speed, with machine consistency, in exactly the wrong direction.

If the answer is yes, then the Human / AI Handshake becomes your operating system. You dial in the right level of human involvement at each step. You optimize for the actual work—not for the vendor pitch, not for the board narrative, not for the fear of falling behind.

That's when AI stops being a panacea and starts being a tool. And tools, wielded by people who understand their own work, are extraordinarily powerful.

Understanding your system is what makes AI useful. The prerequisite—knowing your own work—is the thing that pays.

Now you know what question to ask.

Key Takeaways

- Only 21% of organizations redesign their workflows before deploying AI. The other 79% are scaling systems they don't understand.

- All-or-nothing AI thinking is driven by fear, vendor narrative, and the path of least resistance, none of which is a strategy.

- The Human / AI Handshake has six modes. They're dials, not tiers, set per step based on risk, error cost, and decision type.

- Step-level decomposition is the prerequisite. Intent, Inputs, Execution, Output, Impact: answer them for every step before you set any dial.

- AI readiness is earned, not bought. The organizations that win will master the work first. The tool follows.

Sources & Further Reading

- On AI workflow redesign rates: CapTech / McKinsey research (2025). Only 21% of organizations have fundamentally redesigned workflows as they deploy AI—cited as the foundational data point for this article.

- On AI pilot failure rates: MIT Project NANDA (July 2025). 95% of enterprise AI pilots delivered no measurable P&L impact despite $30–40 billion in investment. The #1 root cause: organizations misunderstand the problem they're trying to solve.

- On the scale of AI implementation failures: RAND Corporation (2025). AI project failure analysis. Over 80% of AI projects fail—twice the rate of non-AI IT projects—with misunderstood problem definition as the leading cause.

- On why AI amplifies broken processes: Minock, J. (2026). "AI Doesn't Fix Broken. It Scales It." Deliberate Work. The companion piece establishing why process understanding must precede automation.

- On the boundary between human judgment and AI execution: Minock, J. (2026). "The Human / AI Handshake." Deliberate Work. The detailed treatment of the two planes of human-AI work and how to design the boundary between them.

- On the operational symptoms that make AI deployment fail: Minock, J. (2026). "Something Is Breaking in Your Business Right Now." Deliberate Work. Tribal knowledge, invisible handoffs, and heroic effort—the three symptoms AI accelerates when deployed without step-level clarity.

- On building deliberate systems: Deliberate Work covers the complete framework and methodology in depth.

TL;DR

Only 21% of organizations redesign their workflows before deploying AI. The other 79% are adding a powerful tool to a system they don't understand—and accelerating their existing problems. The answer isn't better AI. It's step-level decomposition first: understanding intent, inputs, execution, output, and impact for every unit of work, before you decide how much AI belongs in it. That's what makes the Human / AI Handshake dial settable. And that's what separates the 21% from everyone else.
